
New advances in facial recognition technology are challenging the prevailing view that it is racist.

This comes as New Zealand's public sector checks its own biometric technologies for bias, and as it asks facial recognition companies what improvements could be made to the tech.

A recent breakthrough at US universities has been training facial recognition (FR) on synthetic, AI-generated facial images rather than real ones, with tests returning far more accurate results.

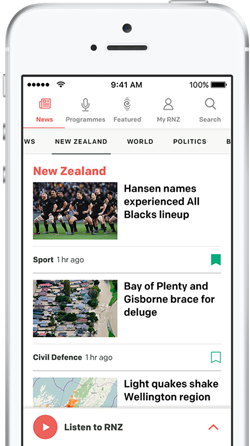

The Department of Internal Affairs (DIA) [launched Identity Check](https://www.rnz.co.nz/news/national/502926/racial-bias-expected-in-government-s-new-identity-check) a few months ago without its possible racial bias being fully tested, though it appeared to be fairly accurate, and a Māori reference group said it was "comfortable" the technology would catch up.

DIA told RNZ on Friday it now had a globally recognised lab checking Identity Check's liveness biometrics for bias, with results due in a few months.

"We want to know more about fairness," it said.

"We are committed to the ethical and responsible use of FR."

The department checks the photos people take of themselves and submit on their smartphones, in order to issue passports and verify identities online. DIA and Customs run some of the busiest facial recognition systems in the country.

Last week it issued a tender asking tech companies how it could get better at catching deep fakes and other fraud, using what is called "liveness" tech.

"Fraud and threats in our environment are ever-present," DIA told RNZ, noting deep fakes of facial images were a threat.

"We need to keep adjusting our technologies to stay ahead of this."

The tender asks companies to describe how their systems perform around false acceptance and false rejection rates and bias.

The Ministry of Social Development (MSD) also uses Identity Check when beneficiaries choose to identify themselves that way, online and in real time.

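The system is a form of one-to-one photo matching (checking a photo against the one on file for the person it claims to be), as opposed to the less accurate one-to-many matching (searching for a face across a whole database).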
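The tender's questions about false acceptance, false rejection and bias can be made concrete with a small sketch. It assumes a one-to-one system that gives each pair of photos (a submitted selfie and the reference photo for the claimed identity) a similarity score, and accepts the pair when the score clears a threshold. The function name, the 0.6 threshold and the sample scores are hypothetical and purely illustrative; they are not DIA's method or data.

```python
from collections import defaultdict


def error_rates_by_group(trials, threshold):
    """Hypothetical helper: per-group false acceptance and false rejection rates.

    trials: iterable of (group, score, is_genuine) tuples, where is_genuine is
    True when both photos really show the same person.
    Returns {group: (far, frr)}.
    """
    counts = defaultdict(lambda: {"impostor": 0, "false_accept": 0,
                                  "genuine": 0, "false_reject": 0})
    for group, score, is_genuine in trials:
        c = counts[group]
        # One-to-one decision: does this selfie match the claimed identity's photo?
        accepted = score >= threshold
        if is_genuine:
            c["genuine"] += 1
            if not accepted:
                c["false_reject"] += 1  # real person wrongly turned away
        else:
            c["impostor"] += 1
            if accepted:
                c["false_accept"] += 1  # impostor wrongly let through
    rates = {}
    for group, c in counts.items():
        far = c["false_accept"] / c["impostor"] if c["impostor"] else 0.0
        frr = c["false_reject"] / c["genuine"] if c["genuine"] else 0.0
        rates[group] = (far, frr)
    return rates


# Made-up scores for two illustrative groups, "A" and "B".
trials = [
    ("A", 0.90, True), ("A", 0.40, True), ("A", 0.70, False), ("A", 0.20, False),
    ("B", 0.80, True), ("B", 0.95, True), ("B", 0.30, False), ("B", 0.10, False),
]
print(error_rates_by_group(trials, threshold=0.6))
```

Comparing these two rates across demographic groups, rather than looking only at overall accuracy, is one common way of expressing the kind of bias the tender asks about: in this toy data, group A sees errors that group B does not.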

A decade ago, facial recognition sparked controversy worldwide for misidentifying Black and brown faces far more often than white faces, especially women's faces; some systems showed a racial or gender bias of four percent. In 2019 it was reported that many algorithms were 10 to 100 times more likely to misidentify a photo of a non-white face than one of a white face.

But some systems are now down to an error rate of 0.02 percent, benchmark testing by the US National Institute of Standards and Technology (NIST) shows.

The perception that the technology is racist has persisted, but it is "wrong", said US thinktank Lawfare.

"For all the intense press and academic focus on the risk of bias in algorithmic face recognition, it turns out to be a tool that is very good and getting better," it said.

At New York University, researchers generated 13 million synthetic images of faces from six major racial groups (Black, Indian, Asian, Hispanic, Middle Eastern and White) to train three types of facial recognition model.

"The result not only boosted overall accuracy compared to models trained on existing imbalanced datasets, but also significantly reduced demographic bias," they reported in April.

They have open-sourced their code for others to use.

"Facial recognition technology continues to get better with time, with improved camera technologies, more diverse datasets," said facial recognition bias expert Ifeoma Nwogu at the University at Buffalo.

Whatever its accuracy, debate remains fierce over the spread of facial recognition, and over whether, or how, it should be used in public spaces.